Metadata Conditioned Large Language Models for Localization

George Mason University

Abstract

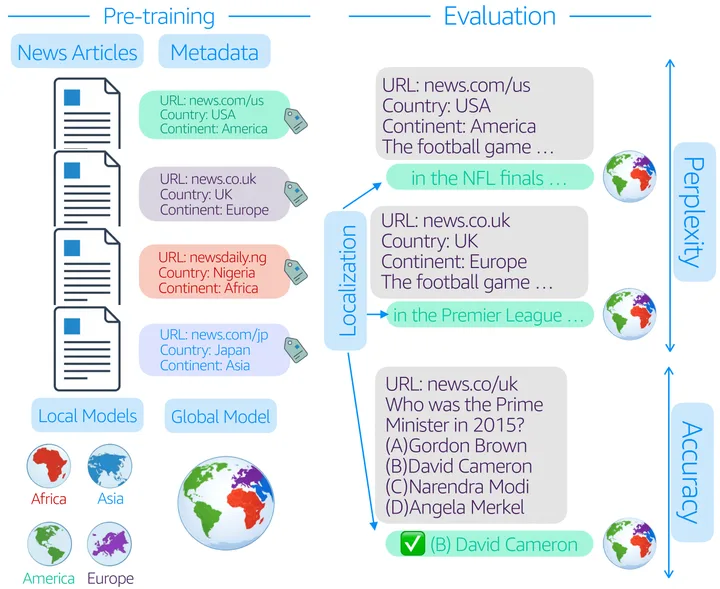

Large language models are typically trained by treating text as a single global distribution, often resulting in geographically homogenized behavior. We study metadata conditioning as a lightweight approach for localization, pre-training 31 models (at 0.5B and 1B parameter scales) from scratch on large-scale English news data annotated with verified URLs, country tags, and continent tags, covering 4 continents and 17 countries. Across four controlled experiments, we show that metadata conditioning consistently improves in-region performance without sacrificing cross-region generalization, enables global models to recover localization comparable to region-specific models, and improves learning efficiency. Our ablation studies demonstrate that URL-level metadata alone captures much of the geographic signal, while balanced regional data coverage remains essential, as metadata cannot fully compensate for missing regions. Finally, we introduce a downstream benchmark of 800 localized news MCQs and show that after instruction tuning, metadata conditioned global models achieve accuracy comparable to LLaMA-3.2-1B-Instruct, despite being trained on substantially less data. Together, these results establish metadata conditioning as a practical and compute-efficient approach for localization of language models.
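To make the idea concrete, here is a minimal sketch of what metadata conditioning could look like at the data-preparation stage: the URL, country, and continent annotations are prepended to each document before it is tokenized. The function name `condition_on_metadata`, the `Field: value` header layout, and the example URL and text are assumptions made for illustration; the abstract does not specify the template the authors use.

```python
from typing import Optional


def condition_on_metadata(
    text: str,
    url: Optional[str] = None,
    country: Optional[str] = None,
    continent: Optional[str] = None,
) -> str:
    """Prepend whichever metadata fields are available to a document.

    The "Field: value" header layout is a hypothetical format chosen for
    illustration, not the template used in the paper.
    """
    fields = [("URL", url), ("Country", country), ("Continent", continent)]
    # Keep only the fields that were actually provided.
    header = [f"{name}: {value}" for name, value in fields if value]
    return "\n".join(header + [text])


# Illustrative document and metadata (made up for this sketch).
example = condition_on_metadata(
    "Parliament passed the budget bill on Tuesday.",
    url="https://example.com/news/budget",
    country="Kenya",
    continent="Africa",
)
print(example)
```

Passing only `url` in this sketch mirrors the URL-only ablation setting mentioned above, and passing no metadata returns the document unchanged.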

BibTeX

@misc{mukherjee2026metadata,
  title = {Metadata Conditioned Large Language Models for Localization},
  author = {Mukherjee, Anjishnu and Zhu, Ziwei and Anastasopoulos, Antonios},
  year = {2026},
  eprint = {2601.15236},
  archivePrefix = {arXiv},
  primaryClass = {cs.CL},
  url = {https://arxiv.org/abs/2601.15236}
}